Definition of AI FinOps

AI FinOps is the practice of managing AI spend in a way that balances business value, financial accountability, operational control, and technical efficiency.

Why AI Requires a Different FinOps Lens

Traditional cloud cost management is already complex, but AI adds a further level of variability and uncertainty. Unlike more predictable infrastructure workloads, AI usage can shift quickly with user demand, prompt design, model choice, and experimentation cycles. As a result, organisations need a more deliberate operating model to manage both cost and value.

AI introduces several challenges:

- Variable usage: AI costs can change quickly because usage depends on prompts, traffic patterns, model behaviour, and how often workflows are triggered.

- Harder forecasting: AI spend is more difficult to predict because small changes in request volume, context length, or model selection can significantly change cost.

- Model choice trade-offs: Choosing between models means balancing cost, speed, and accuracy rather than simply selecting the most powerful option.

- Prompt and context cost: Longer prompts and larger context windows can quietly increase inference cost even when the user experience appears unchanged.

- Experimentation risk: AI experimentation can create uncontrolled spend when teams test multiple models, prompts, and workflows without clear financial limits.

- Governance and compliance overhead: AI introduces additional cost and complexity through approvals, audit requirements, data controls, retention policies, and regulatory expectations.
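To make the forecasting and context-cost points concrete, the sketch below estimates monthly inference spend under token-based pricing. It is a minimal illustration only: the `ModelPrice` type, the `monthly_cost` function, and every price and volume figure are assumptions chosen for round numbers, not real vendor rates.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelPrice:
    """Hypothetical price per million tokens (illustrative, not a real rate card)."""
    input_per_million: float
    output_per_million: float


def monthly_cost(price: ModelPrice, requests: int,
                 input_tokens: int, output_tokens: int) -> float:
    """Estimate monthly spend from request volume and average tokens per request."""
    cost_per_request_x1m = (input_tokens * price.input_per_million
                            + output_tokens * price.output_per_million)
    return requests * cost_per_request_x1m / 1_000_000


# Assumed prices for a smaller and a larger model.
small = ModelPrice(input_per_million=0.50, output_per_million=1.50)
large = ModelPrice(input_per_million=5.00, output_per_million=15.00)

# Same traffic (100,000 requests, 200 output tokens each) in three scenarios.
baseline = monthly_cost(small, 100_000, input_tokens=1_000, output_tokens=200)
longer_context = monthly_cost(small, 100_000, input_tokens=4_000, output_tokens=200)
bigger_model = monthly_cost(large, 100_000, input_tokens=1_000, output_tokens=200)

print(baseline, longer_context, bigger_model)  # 80.0 230.0 800.0
```

Under these assumed figures, quadrupling the prompt length nearly triples spend and switching to the larger model multiplies it by ten, even though request volume is identical in all three scenarios.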

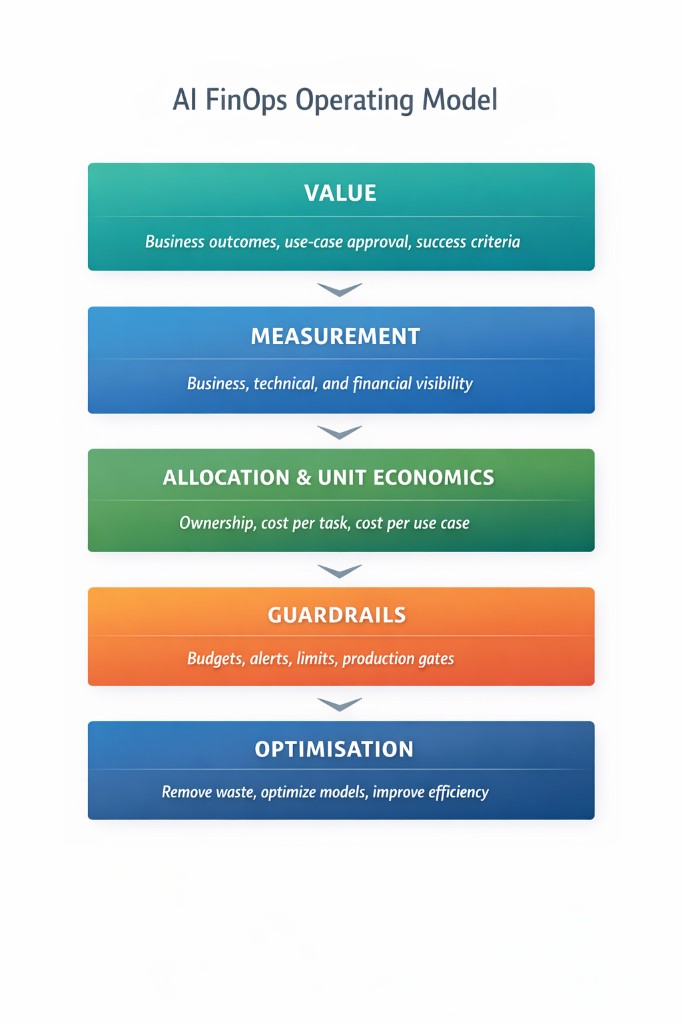

The accompanying diagram summarises a five-layer AI FinOps operating model that connects value, visibility, ownership, guardrails, and optimisation, so teams can move from enthusiasm to accountable adoption.

A Practical AI FinOps Framework

A practical AI FinOps framework can be structured across five layers: value, measurement, allocation and unit economics, guardrails, and optimisation. Together, these layers help organisations move from AI enthusiasm to sustainable and accountable adoption.

Layer 1: Value

This layer ensures AI is tied to real business outcomes rather than isolated technical experimentation. It helps teams define why a use case exists, what success looks like, and how value will be measured. Without this layer, organisations risk investing in AI activity without a clear return or strategic purpose.

Layer 2: Measurement

This layer creates visibility across the business, technical, and financial dimensions that matter most. It helps teams understand how AI is being used, what it is costing, and whether it is delivering meaningful results. Without measurement, organisations may see usage growth without gaining clarity on performance, value, or efficiency.

Layer 3: Allocation and Unit Economics

This layer helps organisations assign AI costs to the right teams, products, features, or use cases. It turns broad spend into understandable unit economics, such as cost per task, cost per workflow, or cost per customer interaction. Without allocation, AI costs remain difficult to own, compare, optimise, or scale with confidence.
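As a rough sketch of how allocation leads to unit economics, the example below groups usage records by team and use case and derives a cost per task. The record fields (`team`, `use_case`, `cost`, `tasks`), the `unit_economics` function, and the sample figures are all hypothetical; real billing or telemetry exports will look different.

```python
from collections import defaultdict

# Hypothetical usage records, e.g. one row per day per tagged workload.
usage_records = [
    {"team": "support", "use_case": "ticket_summary", "cost": 12.0, "tasks": 400},
    {"team": "support", "use_case": "ticket_summary", "cost": 8.0, "tasks": 100},
    {"team": "sales", "use_case": "email_draft", "cost": 30.0, "tasks": 300},
]


def unit_economics(records):
    """Aggregate cost and task counts per (team, use_case) and compute cost per task."""
    totals = defaultdict(lambda: {"cost": 0.0, "tasks": 0})
    for record in records:
        key = (record["team"], record["use_case"])
        totals[key]["cost"] += record["cost"]
        totals[key]["tasks"] += record["tasks"]

    report = {}
    for key, agg in totals.items():
        # Leave cost_per_task undefined when no tasks were completed.
        per_task = agg["cost"] / agg["tasks"] if agg["tasks"] else None
        report[key] = {**agg, "cost_per_task": per_task}
    return report


report = unit_economics(usage_records)
for (team, use_case), row in report.items():
    print(team, use_case, row["cost"], row["tasks"], row["cost_per_task"])
```

The grouping key is the design choice that matters here: whatever dimensions spend is tagged with (team, product, feature, use case) determine which unit costs can later be owned and compared.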

Layer 4: Guardrails

This layer prevents AI experimentation from turning into uncontrolled or unaccountable spend. It introduces budgets, limits, alerts, and approval points so teams can innovate with discipline and oversight. Without guardrails, organisations may move quickly in the short term but create financial and operational risk over time.
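One possible shape for such a guardrail is a budget check that returns an action based on spend to date. The sketch below is an assumption-laden illustration: the `Budget` type, the 80% alert threshold, and the action names are hypothetical, and a real setup would typically rely on the budgeting and alerting features of the platform in use.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Budget:
    """Hypothetical monthly budget for a team or use case."""
    monthly_limit: float
    alert_ratio: float = 0.8  # assumed: alert once 80% of the limit is spent


def check_spend(budget: Budget, spend_to_date: float) -> str:
    """Return 'ok', 'alert', or 'needs_approval' for the current spend level."""
    if spend_to_date >= budget.monthly_limit:
        # Limit reached: further spend should pass through an approval point.
        return "needs_approval"
    if spend_to_date >= budget.alert_ratio * budget.monthly_limit:
        return "alert"
    return "ok"


experiment_budget = Budget(monthly_limit=1_000.0)
for spend in (500.0, 800.0, 1_000.0):
    print(spend, check_spend(experiment_budget, spend))
```

Returning an action rather than raising an error keeps the policy decision (notify, throttle, or pause) separate from the measurement, so experimentation slows down at the limit instead of failing abruptly.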

Layer 5: Optimisation

This layer is where teams improve AI economics once they have enough visibility to make informed decisions. It focuses on reducing waste, improving efficiency, and matching the right model and design choices to the right use case. Without optimisation, organisations may continue scaling AI usage without improving cost-effectiveness or business value.
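One simple way to match a model to a use case is to pick the cheapest candidate that still meets a quality bar for that use case. The sketch below assumes that each candidate already has a measured cost per task and a quality score from an internal evaluation. The `Candidate` type, the `cheapest_adequate` function, the model names, and the numbers are all hypothetical.

```python
from typing import NamedTuple, Optional, Sequence


class Candidate(NamedTuple):
    """Hypothetical evaluation result for one model on one use case."""
    name: str
    cost_per_task: float
    quality: float  # assumed: share of evaluation tasks passed, from 0.0 to 1.0


def cheapest_adequate(candidates: Sequence[Candidate],
                      min_quality: float) -> Optional[Candidate]:
    """Return the lowest-cost candidate meeting the quality bar, or None if none does."""
    adequate = [c for c in candidates if c.quality >= min_quality]
    return min(adequate, key=lambda c: c.cost_per_task, default=None)


candidates = [
    Candidate("small-model", cost_per_task=0.002, quality=0.86),
    Candidate("medium-model", cost_per_task=0.010, quality=0.93),
    Candidate("large-model", cost_per_task=0.040, quality=0.95),
]

routine_choice = cheapest_adequate(candidates, min_quality=0.85)
critical_choice = cheapest_adequate(candidates, min_quality=0.90)
print(routine_choice.name, critical_choice.name)  # small-model medium-model
```

Setting the quality bar per use case makes the trade-off between cost and accuracy explicit: in this example the largest model is never selected, because a cheaper one already clears both thresholds.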